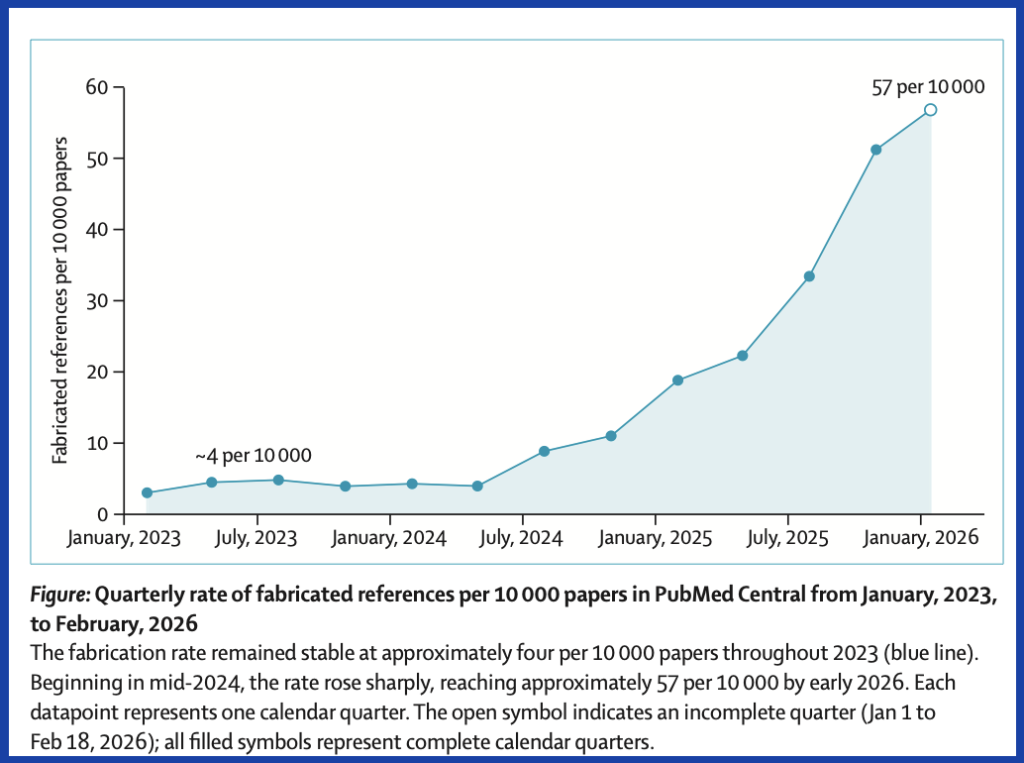

Fabricated citations in the biomedical literature have increased 12-fold in two years, according to an audit of nearly 2.5 million papers published as a letter to The Lancet today.

The analysis of articles indexed in PubMed found that about one in 277 papers published in the first seven weeks of 2026 referenced a paper that didn’t exist. That was a jump from 2025’s rate of one in 458 and 2023’s one in 2,828. The researchers, led by Maxim Topaz of Columbia University’s Data Science Institute, used AI to “distinguish genuine fabrications from formatting discrepancies such as informally abbreviated titles.”

Topaz’s group located the sharpest increase in hallucinated references in mid-2024, which they note coincided with the rise of AI writing tools. The findings follow a report in Nature last month that tens of thousands of publications from 2025 “might include invalid references generated by AI.” Retraction Watch has seen its fair share of reports of hallucinated citations generated by LLMs like ChatGPT.
