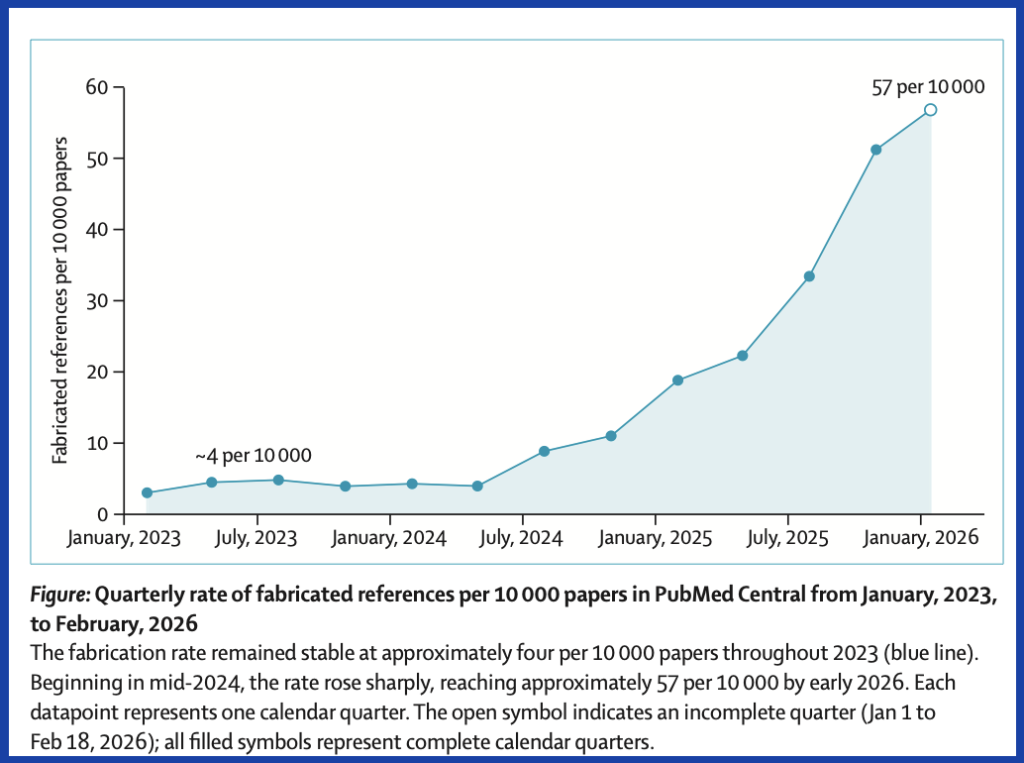

Fabricated citations in the biomedical literature have increased 12-fold in two years, according to an audit of nearly 2.5 million papers, published today as a letter in The Lancet.

The analysis of articles indexed in PubMed found that about one in 277 papers published in the first seven weeks of 2026 referenced a paper that didn’t exist. That was a jump from 2025’s rate of one in 458 and 2023’s one in 2,828. The researchers, led by Maxim Topaz of Columbia University’s Data Science Institute, used AI to “distinguish genuine fabrications from formatting discrepancies such as informally abbreviated titles.”

Topaz’s group located the sharpest increase in hallucinated references in mid-2024, which they note coincided with the rise of AI writing tools. The findings come as Nature reported last month that tens of thousands of publications from 2025 “might include invalid references generated by AI.” Retraction Watch has seen its fair share of reports of hallucinated citations generated by LLMs like ChatGPT.

In their sample, Topaz and his colleagues were able to verify 97.1 million references, from which they identified 4,406 “fabricated” references that appeared in a total of 2,810 papers. Based on data from the Retraction Watch Database and elsewhere, nearly all the articles found to have fake references — over 98% — had seen “no publisher action” at the time of the audit in February.
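Dividing the reported totals gives a sense of scale. This is simple arithmetic on the figures above, not a result stated in the Lancet letter:

$$
\frac{4{,}406}{97.1\times 10^{6}} \approx 4.5\times 10^{-5}\ \text{(about 1 in 22,000 verified references)},\qquad
\frac{4{,}406}{2{,}810} \approx 1.57\ \text{fabricated references per affected paper}.
$$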

A Taylor & Francis spokesperson told us the publisher is investing in “technology, specialist staff and processes to catch problematic” citations. Articles with concerning references are returned to the author, the spokesperson said, adding, “If these account for more than a small proportion of citations and/or substantially impact the overall integrity of the manuscript, the submission will usually be rejected.”

Renee Hoch, head of publication ethics for PLOS, told us they are “exploring options for system-wide reference integrity screening.” Hoch also said PLOS doesn’t automatically classify fabricated references as misconduct: “Research misconduct has a specific definition that includes an element of intent, and whether an issue qualifies as research misconduct is addressed at the institutional level, not at the journal or publisher level,” she said.

We also contacted Elsevier, Wiley, Springer Nature, IEEE and Sage, but they did not respond in the short timeframe provided by The Lancet’s embargo.

Publishers need to take fabricated references seriously, Howard Bauchner and Frederick Rivara write in a commentary accompanying the analysis. Bauchner is the former editor of JAMA and Rivara the former editor of JAMA Pediatrics. The two argue that in cases in which a hallucinated reference appears, the paper should be retracted.

Researchers “incur responsibility for the entire content of that paper” when they agree to be authors, Bauchner and Rivara write. “Retraction of these manuscripts might lead to greater scrutiny of references by authors of manuscripts.”

David Resnik, an integrity researcher at the National Institutes of Health, disagrees, telling us the choice of whether a paper with a fabricated citation should be retracted “depends on the role the citation plays in supporting the results of the study.” His view aligns with that of Topaz, who told us an article should be retracted when the hallucinated references are central to the conclusions of the paper. Topaz gave an example from the analysis where 18 of 30 references appear to have been fabricated.

“For papers with one or two fabricated references that are incidental to the main findings, I think correction and transparency may be more proportionate than retraction,” Topaz told us. He noted 91% of the articles in his group’s dataset of problematic papers had only one or two fabricated references, many of which “are likely honest mistakes by authors who used AI tools without verifying the output.”

Ella Flemyng, the head of editorial policy and research integrity at Cochrane, called the new study’s findings “serious” but had concerns about it. “Though the approach [using AI] was validated on 500 records and the main limitations are discussed, we are lacking considerable details about the methods,” she said.

She also noted that because the conclusions rely on an AI-assisted audit, “confidence in the findings depends less on the headline number and more on: how the AI system was designed and validated; how errors were assessed and corrected; and how reproducible and transparent the overall process is.”

Mohammad Hosseini, who studies AI ethics and research integrity at Northwestern University’s Feinberg School of Medicine, called The Lancet analysis “simplistic.” In a March paper, Hosseini and Resnik made a point of distinguishing between hallucinated citations that matter to a paper’s scientific conclusions and those that do not. Topaz’s group didn’t differentiate scientifically critical references – which effectively function as data – from those that were relatively less important, Hosseini said.

Hosseini told us the study represents “low-hanging fruit” and the “tip of the iceberg.” He said the “bigger and more important problem” remains citations generated by AI that aren’t wholly hallucinated but are inaccurate, biased or incomplete. “We are far from being able to even detect them or do anything about them,” he said.

Flemyng had a similar perspective, telling us that, along with addressing individual instances with fabricated citations, “we also need to highlight the pressures in academia that create a perverse incentive for fast science; a researcher needs more publications, more citations, and it doesn’t surprise me that corners are being cut and outputs are not fully verified.”

Hosseini and Resnik wrote in their paper that AI-fabricated citations are likely to persist because hallucinating is “inextricably linked to how LLMs operate.”

Whether or not LLMs are expected to stop hallucinating, Topaz told us, the “damage is already done.” The “contamination” of over 4,000 fabricated references his team found “does not go away when the AI gets better,” he said.

Hallucinated references have lately been drawing much attention from sleuths, research misconduct investigators and journalists. Late last year, we reported that a World Bank paper on obesity trends contained at least 14 fake references. In March, we wrote about a librarian who discovered that 12 of 14 references in a Springer Nature article on bowel surgery management did not exist. Our cofounder Ivan Oransky was the named author of a hallucinated reference cited in a paper published in a Springer Nature journal last year.

On an interactive website about their research, Topaz and his colleagues report on one publisher that “produced fabrications at more than fourteen times the rate of the most selective journals in the dataset.” The publishers with the highest rates of fake references remain unnamed at the site because a “raw comparison of publisher-level rates would be misleading without adjusting for the volume and type of papers each publisher indexes in PubMed,” Topaz told us. He declined to identify the publishers’ individual rates.

“What I can say is that the concentration is disproportionately among large open access journals and publishers, which is consistent with what others have observed about where papermill activity and less rigorous peer review tend to cluster,” he said.

Topaz and his coauthors recommend a series of actions to deal with what they see as the growing problem. First, they suggest fighting AI with AI: “publishers should integrate automated reference verification into submission workflows before peer review begins.” They also want to see article-indexing services add integrity metadata so flags travel with references, and they want to see fake references tracked in research integrity databases. Finally, they say, “publishers should retroactively screen existing publications and issue corrections or retractions when fabricated references compromise a paper’s conclusions.”

The research team is particularly concerned about review articles, which they note “had a fabrication rate that was 57% higher than other paper types.”

Flemyng shares that worry. “In this new age of AI, the need for full systematic reviews that meet the expected standards is paramount. To risk introducing biased, unsystematic AI slop into the literature would be a serious step backwards,” she said.

Like Retraction Watch? You can make a tax-deductible contribution to support our work, follow us on X or Bluesky, like us on Facebook, follow us on LinkedIn, add us to your RSS reader, or subscribe to our daily digest. If you find a retraction that’s not in our database, you can let us know here. For comments or feedback, email us.

I can’t believe that people argue that using “AI tools without verifying the output” should be considered an “honest mistake” rather than scientific misconduct. From my point of view, every paper with a fabricated reference must be retracted, or rejected during peer review.

Mindblowing!

It depends. Most of the fabricated references are AI-driven and, as such, we can kind of infer the degree of intent on the part of the author. I agree that the label ‘honest mistake’ is way too generous in most of these cases. After all, failure to confirm the authenticity of your citations borders on reckless behavior (of course, there is also the notion of citation amnesia [failure to cite papers that go against an author’s position], a far more serious and quite common trespass IMO).

But whether papers should be retracted because of fabricated citations, shouldn’t that depend on the role that such citations play in the paper? For instance, citations that play a minor role (e.g., placing the reported study within the context of existing literature; serving a rhetorical function) and which can be withdrawn without affecting the argument made or the validity of the results can be addressed in a correction that is transparent with respect to the authors’ lapse in vigilance. On the other hand, fake citations that play key roles representing part of the ‘backbone’ of a paper (e.g., justification for the hypothesis, support of obtained results/author’s position) are a different matter and in such cases perhaps a retraction should be considered.

I agree.

Heaven forbid the publishers responsible for this actually do their jobs as gatekeepers of knowledge distribution and check the references before just shoving the PDF on a website and pocketing the APC. I guess when you’re raking in 40% profit margins there are more important things to think about rather than ensuring your core product has integrity.

100%, this would be easy to resolve at the journal level. Legitimate papers will have a permalink to PubMed or the like. Authors need to add the links. Journal needs to filter on domain to see that it’s legitimate, and if not, or the link doesn’t resolve, then you’ve got a fabricated reference.
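The check described in this comment, a domain filter plus a link that must resolve, can be sketched in Python. This is a minimal illustration under stated assumptions, not any journal's actual workflow. The allowlist `TRUSTED_DOMAINS` is a hypothetical example. `resolves` is passed in by the caller so the logic can be tested without network access, and `http_resolves` is one possible standard-library implementation.

```python
from typing import Callable, Iterable
from urllib.parse import urlparse
from urllib.request import urlopen

# Hypothetical allowlist; a real journal would choose its own trusted resolvers.
TRUSTED_DOMAINS = ("pubmed.ncbi.nlm.nih.gov", "doi.org")


def http_resolves(url: str, timeout: float = 10.0) -> bool:
    """Return True if a GET to url ends in a 2xx response (redirects are followed)."""
    try:
        with urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except (OSError, ValueError):
        # HTTP 4xx/5xx errors, network failures, timeouts and malformed URLs
        # all count as "does not resolve".
        return False


def check_reference_link(
    url: str,
    resolves: Callable[[str], bool] = http_resolves,
    trusted: Iterable[str] = TRUSTED_DOMAINS,
) -> str:
    """Classify a reference link as 'ok', 'untrusted domain' or 'link does not resolve'."""
    host = (urlparse(url).hostname or "").lower()
    # Accept a trusted domain or any subdomain of it, but not look-alikes
    # such as "evil-doi.org".
    if not any(host == d or host.endswith("." + d) for d in trusted):
        return "untrusted domain"
    if not resolves(url):
        return "link does not resolve"
    return "ok"
```

A resolving link shows only that the identifier exists. It does not show that the identifier points to the work being cited, so the title of the resolved record would still need to be compared with the citation. Some sites also reject automated requests, so a failed lookup should prompt a human check rather than count as proof of fabrication.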

“David Resnik, an integrity researcher at the National Institutes of Health, disagrees, telling us the choice of whether a paper with a fabricated citation should be retracted ‘depends on the role the citation plays in supporting the results of the study.’”

This claim, like some in the comments, is complete nonsense. Fabricated citations are black-and-white evidence that the author(s) have neither read the materials cited nor written the paper in question. I have zero trust in any such paper. Because I now encounter these on a weekly basis when peer reviewing, I already have a good collection of names I no longer trust either. And, indeed, some have already suggested that we should impose bans on these people (e.g., not being allowed to submit to a venue for five years).

Please, let’s be a bit more realistic about these matters. Have you not come across instances in which genuine papers were used as citations in support of a claim that actually did not support the claim? Or how about instances in which papers were obviously cited inappropriately because they were based on a seemingly cursory reading of an abstract rather than a reading of the actual paper which may clearly indicate serious methodological flaws? And, again, what about the quite common problem of citation amnesia, a very pernicious practice which, in addition to often intentionally distorting the status of the evidence, also denies acknowledgement to those whose work is being suppressed? Should ALL of these instances automatically lead to retractions?

What? Are you really arguing for some “understanding” about fabrication, scientific misconduct par excellence? I now encounter hallucinated authors, paper titles, and, yes, even DOIs. And as some editors, journals, and publishers do not seem to care, yes, I maintain my own blacklists. These also include journals. If you keep sending me slop for review, I have little trust in you as an editor, a journal, or a publisher.

I was pointing out that, in addition to ‘hallucinated citations’, other frequent forms of citation misconduct, some quite serious IMO, have been around for decades (e.g., intentionally failing to cite relevant work, https://www.the-scientist.com/citation-amnesia-the-results-44065) and questioned whether papers with other types of citation misconduct should be similarly treated with a blanket retraction. I certainly agree that in _some_ such cases, particularly those in which highly relevant literature is intentionally omitted, the papers should, indeed, be retracted. But more to the point, I was agreeing with David Resnik’s point that it “depends on the role the citation plays in supporting the results of the study”. In other words, each case should be judged on its own demerits.

Yes, of course they should all be retracted. The fact that they aren’t is an indictment of the currently prevailing standards of academic and journal integrity.

“What I can say is that the concentration is disproportionately among large open access journals and publishers, which is consistent with what others have observed about where papermill activity and less rigorous peer review tend to cluster,” he said.

Maybe this is not so surprising since they only investigated open access articles.

Using DOIs in references, and checking both the consistency of the metadata and the existence of the cited articles, might improve the situation.

Ironically, the Lancet article itself doesn’t include DOIs in the references: neither on the Lancet webpage: https://www.thelancet.com/journals/lancet/article/PIIS0140-6736(26)00603-3/fulltext nor in the freely available PDF of the article: https://www.thelancet.com/action/showPdf?pii=S0140-6736%2826%2900603-3.

The Lancet webpage only provides Google Scholar links to the cited references, and two of the ten references (Ref 3 and Ref 6) actually return “Your search did not match any articles” in Google Scholar when clicked.

Furthermore, Ref 8 cites one of the articles the authors found to contain fake references, which is perhaps not a good idea: this low-quality article has now received a citation, although it had never been cited before.

“The usage of DOIs in references …”

Indeed, and it would also save a lot of time for legitimate authors. Crossref and the like already have the metadata, so why can’t we just have DOIs in a bibliography? It would also save time for reviewers, as they could just click the links. The same goes for publishers, who could simply retrieve the metadata during typesetting if they even want it. Crossref could grant waivers to smaller community publishers. For the rare cases without DOIs, ISBNs or URLs would suffice. Problem solved.
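The DOI-based check these commenters describe can be sketched as follows. The snippet looks up a DOI in Crossref's public REST API (`https://api.crossref.org/works/{doi}`) and returns the registered title, or `None` when Crossref answers 404. Several parts are assumptions: the DOI regex is a simplified pattern, and `fetch` can be swapped out so the function can be tested offline. DOIs registered with other agencies, such as DataCite, are not in Crossref, so a miss here is not by itself proof of fabrication.

```python
import json
import re
from typing import Callable, Optional
from urllib.error import HTTPError
from urllib.parse import quote
from urllib.request import urlopen

CROSSREF_WORKS = "https://api.crossref.org/works/"
# Simplified pattern: "10." + registrant code + "/" + a suffix without whitespace.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def fetch_bytes(url: str, timeout: float = 10.0) -> Optional[bytes]:
    """GET url and return the body, or None if the server answers 404 Not Found."""
    try:
        with urlopen(url, timeout=timeout) as resp:
            return resp.read()
    except HTTPError as err:
        if err.code == 404:
            return None
        raise  # other HTTP errors (rate limits, outages) must not look like fakes


def crossref_title(
    doi: str, fetch: Callable[[str], Optional[bytes]] = fetch_bytes
) -> Optional[str]:
    """Return the title Crossref records for doi, or None if Crossref has no record."""
    doi = doi.strip()
    if not DOI_PATTERN.match(doi):
        raise ValueError(f"not a DOI: {doi!r}")
    body = fetch(CROSSREF_WORKS + quote(doi, safe="/"))
    if body is None:
        return None
    titles = json.loads(body)["message"].get("title") or []
    return titles[0] if titles else ""
```

In a submission workflow, the returned title would then be compared with the title the author cited. A real DOI attached to the wrong paper would pass a simple existence check.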

I presume that some automated systems (I am going to refrain from calling them “AI”: we have been using computers to assist us in our work for decades) can reveal references that are not in the named journals’ databases. However, before we can refer to these as “fabricated”, we also need to ensure that a rogue letter, misspellings, or incorrect dates/URLs do not lead to the assumption that a reference is fabricated.
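The commenter's point is that a stray letter or a shortened title should not be counted as a fabrication. Plain string similarity from Python's standard `difflib` can illustrate this. It is a hedged sketch and not the AI-assisted method the Lancet authors used. The 0.85 threshold, the three-word minimum and the labels are arbitrary assumptions chosen for illustration.

```python
import difflib
import re
from typing import List, Optional


def tokens(title: str) -> List[str]:
    """Lower-case the title and keep only alphanumeric words."""
    return re.findall(r"[a-z0-9]+", title.lower())


def title_similarity(cited: str, indexed: str) -> float:
    """Score in [0, 1]: the better of character similarity and word containment."""
    cited_toks, indexed_toks = tokens(cited), tokens(indexed)
    if not cited_toks or not indexed_toks:
        return 0.0
    # Character-level ratio tolerates misspellings and "rogue letters".
    char_ratio = difflib.SequenceMatcher(
        None, " ".join(cited_toks), " ".join(indexed_toks)
    ).ratio()
    # Share of cited words found in the indexed title tolerates shortened titles;
    # only used for titles of at least three words (an arbitrary cut-off).
    containment = 0.0
    if len(cited_toks) >= 3:
        indexed_set = set(indexed_toks)
        containment = sum(t in indexed_set for t in cited_toks) / len(cited_toks)
    return max(char_ratio, containment)


def classify_reference(cited: str, indexed: Optional[str], threshold: float = 0.85) -> str:
    """Label a citation given the title of its best database match (None if no match)."""
    if indexed is None:
        return "no record found"
    if title_similarity(cited, indexed) >= threshold:
        return "formatting discrepancy"
    return "possible fabrication"
```

Any label this produces is only a triage signal for a human reviewer. A fabricated title that happens to resemble a real paper would still score high.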

The power of expert peer review is that reviewers, being of the discipline, KNOW the literature, and are hopefully curious about any cited papers they are not familiar with.

Scholarship is a human activity, as is quality assurance in scholarship. I don’t know what the “AI” methodology was here, but for thoughtful scholarship, humans should not delegate the task of evaluation, and certainly not the task of evaluating AI itself.

A frequently repeated “quotation”, usually attributed to Einstein but not confirmed as his words, is useful here. I will take my best stab at a proper APA citation for a quotation from an unidentified author. It may very well be wrong, albeit not falsified. In any case, it underscores my skepticism about using AI to detect AI.

“We cannot solve our problems with the same thinking we used to create them.” ([unidentified quotation], n.d.)

The Lancet study’s figure of 4,406 fake references is almost certainly a massive undercount due to its reliance on resolvable IDs and the PMC Open Access subset.

To illustrate the ‘dark matter’ of this problem, I found a paywalled, non-PubMed article (published in 2025 in Applied Materials Today, https://doi.org/10.1016/j.apmt.2025.102647) that contains at least 9 AI-hallucinated references. Because this is a materials science journal, it is not indexed in PMC or PubMed, which places it outside the Lancet study’s scope. The article itself is behind a paywall, but its metadata, including the references, are available at Crossref: https://api.crossref.org/works/10.1016/j.apmt.2025.102647

The hallucinations include ‘J. Doe’ and ‘J. Smith’ (common placeholder names) as authors in Ref 88, and other similar common-name pairs with made-up article titles in Refs 21, 22, 24, 25, 81, 89, 90 and 92. The fake references also carry plausible-looking but non-resolvable DOIs, which were also outside the scope of the Lancet study. These are the 9 fake DOIs: 10.1016/j.compscitech.2023.109856, 10.1016/j.memsci.2022.01.008, 10.1016/j.mse.2020.05.005, 10.1016/j.porgcoat.2020.105488, 10.1016/j.porgcoat.2021.106066, 10.1016/j.porgcoat.2022.106198, 10.1016/j.porgcoat.2023.106321, 10.1016/j.triboint.2019.07.012, 10.1063/5.0047893. I found this and over 300 other suspicious articles simply by searching reference lists for references that name both ‘Doe J’ and ‘Smith J’ as authors.
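The commenter's search for references that name both ‘J. Doe’ and ‘J. Smith’ is simple to reproduce on plain reference strings. The sketch below assumes only initial-plus-surname forms (‘Doe J’, ‘Doe, J.’, ‘J. Doe’). The other name pairs mentioned above are not covered, and the helper names are hypothetical. A hit is a reason for manual checking, not proof, because Smith J in particular is a common real author name.

```python
import re
from typing import List


def initial_surname_pattern(surname: str, initial: str = "J") -> "re.Pattern[str]":
    """Match 'Surname I', 'Surname, I.' or 'I. Surname', ignoring case."""
    s, i = re.escape(surname), re.escape(initial)
    return re.compile(rf"\b(?:{s},?\s+{i}\b|{i}\.?\s+{s}\b)", re.IGNORECASE)


DOE_J = initial_surname_pattern("Doe")
SMITH_J = initial_surname_pattern("Smith")


def flag_placeholder_references(references: List[str]) -> List[int]:
    """Return the indices of references that name both J. Doe and J. Smith."""
    return [
        i
        for i, ref in enumerate(references)
        if DOE_J.search(ref) and SMITH_J.search(ref)
    ]
```

Word boundaries keep look-alike surnames such as "Doerr" or "Smithson" from matching.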

Conclusions:

1. The 23% (ca 25 million) of references the Lancet authors excluded (because they lacked resolvable IDs) are likely where the most blatant fakes hide. It would be interesting to know the order of magnitude of these.

2. While the Lancet authors found fakes with ‘resolvable’ IDs, in many cases those IDs were likely force-matched by faulty fuzzy-matching algorithms at the publisher or PMC level. As far as I know, few journals require authors to provide PMIDs for their references, so the resolvable PMIDs, and even many of the DOIs, attached to fake references were most probably added to the PMC data by the publishers and/or PMC itself, not by the authors (or the LLMs they used).

3. It seems that the whole scholarly infrastructure is inadvertently ‘laundering’ AI slop into the record, and the problem of fake references is not exclusive to open access publications and venues.