H. Studd et al/medRxiv 2026

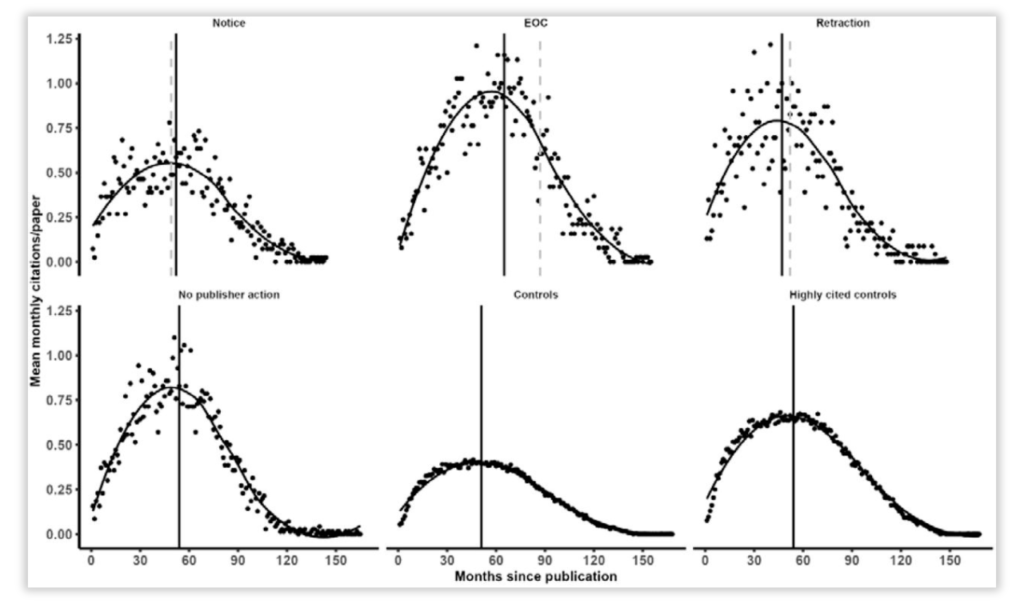

Neither retractions, expressions of concern, nor other editorial notices seem to keep authors from continuing to cite problematic papers, according to a look at what happened to more than 170 articles by one author.

“After the public notification of integrity concerns about an article, it would be expected that other authors would no longer cite the article because it is unreliable,” write the authors of a new preprint. But that’s not what they found in a limited comparative study. Whether the findings generalize remains to be seen, says another expert.

Four sleuths – the University of Aberdeen’s Hugo Studd and Alison Avenell and the University of Auckland’s Andrew Grey and Mark J. Bolland – charted citation data for 172 papers on clinical trials from Zatollah Asemi, a nutrition researcher at Kashan University of Medical Sciences in Iran, whose work has come under scrutiny.
